I recently delivered a short presentation called “I Think Therefore I Blog”. Whilst this does not specifically have anything to do with software architecture, I hope it might provide some encouragement to colleagues and others out there in the blogosphere as to why blogging can be good for you and why it’s worth pursuing, sometimes in the face of little or no feedback!

Reason #1: Blogging helps you think (and reflect)

The author Joan Didion once said, “I don’t know what I think until I try to write it down.” Amazon CEO Jeff Bezos preaches the value of writing long-form prose to clarify thinking. Blogging, as a form of self-expression (and I’m not talking about blogs that just post references to other material), forces you to think by writing down your arguments and assumptions. This is the single biggest reason to do it, and I think it alone makes blogging worthwhile.

You have a lot of opinions and I’m sure you hold some of them pretty strongly. Pick one and write it up in a post; I’m sure your opinion will change somewhat, or at least become more nuanced. Putting something down on ‘paper’ means a lot of the uncertainty and vagueness goes away, leaving you to defend your position to yourself. Even if no one else reads or comments on your blog (and they often don’t) you still get the chance to clarify your thoughts in your own mind, and as you write, they become even clearer.

The more you blog, the better you become at writing for your audience, managing your arguments, defending your position, thinking critically. I find that if I don’t understand something very well and want to learn more about it, writing a blog post about that topic focuses my thinking and helps me learn it better.

Reason #2: Blogging enforces discipline

A blog is a broadcast, not a publication. It is not static. Like a shark, if it stops moving, it dies. If you want your blog to last and grow you need to write regularly, so it enforces some form of discipline on your life.

Although I don’t always achieve this, I do find that writing a little and often is better than trying to write a whole post in one go. Start a post with an idea, write it down, then add to it as your thoughts develop. You’ll soon have something you are happy with and ready to publish. The key thing is to start as soon as you have an idea: capture it straight away before you forget it, then expand on it.

Reason #3: Blogging gives you wings

If you persist with blogging, you will discover that you develop new and creative ways to articulate what you want to say. As I write, I often search for alternative ways to express myself. This can be through images, quotes, a retelling of old experiences through stories, videos, audio, or useful hyperlinks to related web resources.

You have many ways to convey your ideas, and you are only limited by your own imagination. Try out new ways of communicating and take risks. Blogging is the platform that allows you to be creative.

Reason #4: Blogging creates personal momentum

Blogging puts you out there, for all the world to see, to be judged and criticized for both your words and how you structure them. It’s a bit intimidating, but I know the only way to become a better writer is to keep doing it.

Once you have started blogging, and you realise that you can actually do it, you will probably want to develop your skills further. Blogging can be time-consuming, but the rewards are ultimately worth it. In my experience, I find myself breaking out of inertia to create some forward movement in my thinking, especially when I blog about topics that may be emotive, controversial or challenging. The more you blog, the better you become at writing for your audience, managing your arguments, defending your position and thinking critically. The photographer Henri Cartier-Bresson said “your first 10,000 photos are your worst”. A similar rule probably applies to blog posts!

I also believe blogging makes me better at my job. I can’t share my expertise or ideas if I don’t have any. My commitment to write 2-4 times per month keeps me motivated to experiment and discover new things that help me develop at work and personally.

Conversely, if I am not blogging regularly then I need to ask myself why that is. Is it because I’m not getting sufficient stimulus or ideas from what I am doing and, if so, what can I do to change that?

Reason #5: Blogging gives you (more) eminence

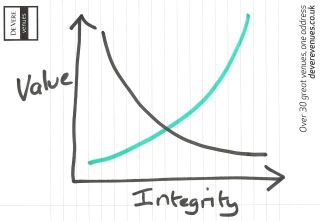

Those of us who work in the so-called knowledge economy need to build and maintain, for want of a better word, our ‘eminence’. Eminence is defined as being “a position of superiority, high rank or fame”. What I mean by eminence here is having a position which others look to for guidance, expertise or inspiration. You are known as someone who can offer a point of view or an opinion. A blog gives you that platform and also allows you to engage in the real world.

So, there you have it, my reasons for blogging. As a postscript to this, I fortuitously came across this post as I was writing, which adds some kind of perspective to the act of blogging. I suggest you give the post a read, but here is a quote which gives a good summary:

…if you start blogging thinking that you’re well on your way to achieving Malcolm Gladwell’s career, you are setting yourself for disappointment. It will suck the enjoyment out of writing. Every completed post will be saddled with a lot of time staring at traffic stats that refuse to go up. It’s depressing.

I have to confess to doing the occasional bit of TSS (traffic stat staring) myself but at the same time have concluded there is no point in chasing the ratings, as they might have said in more traditional broadcast media. If you want to blog, do it for its own sake and (some of) the reasons above. Don’t do it because you think you will become famous and/or rich (though don’t entirely close the door to that possibility).